What Does “10x” Actually Mean for Startups in 2026?

Everyone in tech is saying some version of “AI makes you 10x.” VCs say it on panels. Founders say it in pitch decks. People selling $49/month productivity tools say it loudest of all.

Nobody stops to ask: 10x what, exactly?

I keep hearing three different claims, usually mashed together as though they’re the same thing. Try 10x more ideas. Start 10 companies instead of one. Or do one idea 10x faster. They sound similar. They are not. And the wrong interpretation will kill you just as quickly as the right one might save you.

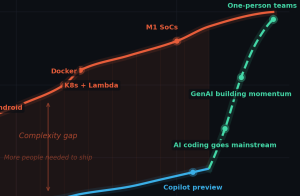

The complexity gap is closing

In the early 1980s, one person could make a complete commercial product. Jeff Minter wrote Attack of the Mutant Camels alone on a Commodore 64. Matthew Smith built Manic Miner by himself on a ZX Spectrum in eight weeks. Andrew Braybrook made Paradroid – one of the best games of the era – solo. One person could hold the entire system in their head: hardware, tools, game logic, graphics, sound, distribution. The whole stack.

I’ve been tracking what happened next – something I call the complexity gap, the widening space between what platforms demand and what a single developer can ship.

The short version: we went from “one person, one PC, Turbo Pascal” to distributed systems, Kubernetes, mobile, cloud, and everything-as-a-service. The complexity curve went near-vertical. Developer productivity didn’t keep up. The gap got filled with headcount.

That gap peaked around 2020. Then Copilot, ChatGPT, Cursor, Claude Code, Devin, Gemini and others showed up, and per-developer shipping power started climbing at a rate we haven’t seen since the early PC era.

The numbers are getting hard to argue with. Stripe’s 2025 annual report shows GitHub pushes up 41% year-on-year, and twice as many companies reaching $10M ARR within three months of launch as in 2024. Claude Code went from zero to $2.5 billion in ARR before its first birthday. SemiAnalysis estimates it already accounts for 4% of public GitHub commits, trending toward 20% by year end.

The anecdote that shook me the most: Cloudflare recently rewrote their Next.js integration in one week, with one engineer. Previous attempts had taken months and several teams. Sure, it’s experimental and untested at scale, but it already makes the point – the construction is no longer the hard part. AI-assisted development is no longer just a productivity improvement. It’s a category change.

So the complexity gap is closing. The question is what opens up behind it.

I’ve seen this film before

I keep thinking about the budget PC games market in the early 2000s, because I lived through that transition and the pattern is uncomfortably familiar.

There was a real business in shipping budget titles into physical retail. Shelf space was the gate – retailers like Walmart controlled roughly 25-30% of US game sales. Publishers shipped “good enough” product into that channel and made decent margins on volume.

Then browser-based distribution showed up. Flash portals. Casual game sites. By 2007, something like 200 million people were playing casual games online each month. The supply of “good enough” exploded, distribution friction vanished, and the price floor fell through.

The interesting bit wasn’t “games became free.” It was subtler. A big middle market – paid but undifferentiated – got hollowed out. Value migrated upward into distribution, aggregation, monetisation mechanics, and brands. Content creation didn’t disappear. It just stopped being scarce.

I think we’re watching the same thing happen to the PoC and MVP end of software now. A proof-of-concept used to be a sellable unit: bounded, delivered under time pressure, with just enough polish to convince stakeholders. A lot of consulting revenue lived in that gap between “idea” and “demoable thing.”

That gap is collapsing. Agentic coding reduces the cost of generating convincing software-shaped evidence. And just like the games market, you get both sides of the squeeze: clients can generate more PoCs internally, so the pricing floor drops – but clients also become more sceptical of PoCs, because they’ve seen too many demos that never survived contact with production. The cost of producing a credible artifact is dropping faster than the cost of trusting it.

The verification gap

Here’s where I think the whole “10x” conversation goes wrong.

Most of the industry is treating agentic AI as a developer-tooling story – better autocomplete, smarter suggestions, faster first drafts. The assumption is that the existing review process will catch whatever AI gets wrong.

I build automotive AR systems. In my world, unverified code isn’t technical debt – it’s a liability. So what worries me isn’t how much code AI is writing. It’s how little anyone is talking about verifying it.

The volume numbers are real. A recent Science paper estimated that by the end of 2024, AI was responsible for roughly 29% of Python functions from US contributors, up from about 5% in 2022. Sundar Pichai said more than a quarter of new code at Google is AI-generated. These aren’t apples-to-apples metrics, but they point the same way.

The quality signals should worry anyone shipping to production. GitClear’s 2025 report, based on 211 million changed lines, found developers increasingly duplicating code blocks rather than refactoring – consistent with AI making it faster to generate a copy than to restructure what exists. Stack Overflow’s 2025 survey: 84% of developers using or planning to use AI tools, but only 33% trust the accuracy of the output. Adoption is outrunning trust.

In automotive, aerospace, medical devices – anywhere a defect has physical consequences – you don’t ship on the basis of “a senior dev looked at the PR.” You need traceable requirements, validated test coverage, documented provenance. The question isn’t “did someone review it?” It’s “can you prove, to an auditor, that this change was verified against a specific requirement and tested under defined conditions?”
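To make the auditor’s question concrete, here is a minimal sketch of a traceability check in Python. Everything in it is hypothetical: the `Change` and `VerificationRun` records, the requirement IDs, and the rule that a requirement counts as covered once it has at least one passing run with recorded test conditions. Real safety standards define their own evidence requirements; this only shows the shape of the question, not any standard’s process.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class VerificationRun:
    """Evidence that one test ran under recorded conditions (hypothetical schema)."""
    test_id: str
    requirement_id: str
    conditions: str  # e.g. the rig, build, or environment the test ran under
    passed: bool


@dataclass
class Change:
    """A code change plus the requirements it claims to implement (hypothetical schema)."""
    change_id: str
    requirement_ids: list[str]
    runs: list[VerificationRun] = field(default_factory=list)


def audit_gaps(change: Change) -> list[str]:
    """Return the reasons this change can't yet be defended to an auditor.

    An empty list means every claimed requirement has at least one passing
    run with recorded conditions (an illustrative rule, not a standard's).
    """
    gaps: list[str] = []
    if not change.requirement_ids:
        gaps.append(f"{change.change_id}: not linked to any requirement")
    for req in change.requirement_ids:
        runs = [r for r in change.runs if r.requirement_id == req]
        if not runs:
            gaps.append(f"{change.change_id}: no test evidence for {req}")
        elif not any(r.passed and r.conditions for r in runs):
            gaps.append(
                f"{change.change_id}: no passing run with recorded conditions for {req}"
            )
    return gaps


# Example: REQ-7 has evidence, REQ-9 has none.
change = Change(
    "CHG-1",
    ["REQ-7", "REQ-9"],
    [VerificationRun("T-1", "REQ-7", "HIL rig, build 42", True)],
)
print(audit_gaps(change))  # ['CHG-1: no test evidence for REQ-9']
```

Returning reasons instead of a single boolean means the output doubles as an audit trail: it names which link in the requirement-to-evidence chain is missing, which is what “a senior dev looked at the PR” can’t tell you.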

AI-generated code doesn’t come with any of that for free. And the current metrics – lines generated, tasks completed, commits tagged – tell you nothing about whether the output is maintainable, testable, or safe to deploy.

So the complexity gap is closing – but a verification gap is opening right behind it. And that gap is where the real value will concentrate.

What 10x actually means

The three popular interpretations of 10x all miss this.

10x more ideas is a trap. An OECD study from 2025 found AI-aided ideation produces less diverse output – it converges on obvious solutions and narrows the search space. More ideas that look the same is not a strategy.

10 companies instead of one is a fantasy. Agents reduce the cost of starting. They don’t remove the cost of being accountable – security reviews, reliability, compliance, and the emotional burden of decisions that matter. Those don’t parallelise.

One idea, 10x faster is closest to true, but dangerously easy to do wrong. Speed multiplies mistakes unless you also multiply evaluation.

Here’s my actual answer: the cost of being wrong dropped 10x. But the cost of proving you’re right didn’t.

That asymmetry is the story of 2026. Prototyping is cheap. Verification is not. And the teams that benefit from AI-generated code will be the ones building the governance layer: review gates calibrated to AI’s specific failure modes, test coverage that distinguishes what the model wrote from what a human wrote, provenance metadata that survives from generation to deployment.
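A governance layer like that could start as something as small as a merge gate. The sketch below assumes a hypothetical setup in which each changed hunk carries an `author` tag (“human” or “model”) recorded at generation time, and per-file line coverage from the test run is available as a plain dict. The thresholds in `GatePolicy` are illustrative placeholders, not recommendations, and none of this reflects any particular tool’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hunk:
    """A block of changed lines plus its provenance (hypothetical schema)."""
    path: str
    lines: frozenset[int]
    author: str  # "human" or "model"; assumed to be recorded when the code was generated


@dataclass(frozen=True)
class GatePolicy:
    """Illustrative thresholds only; pick your own."""
    min_model_line_coverage: float = 0.9
    reviewers_if_model_authored: int = 2
    reviewers_if_human_only: int = 1


def coverage(hunks: list[Hunk], covered: dict[str, set[int]], author: str) -> float:
    """Fraction of changed lines by `author` that tests executed (1.0 if there are none)."""
    total = hit = 0
    for h in hunks:
        if h.author != author:
            continue
        total += len(h.lines)
        hit += len(h.lines & covered.get(h.path, set()))
    return hit / total if total else 1.0


def review_gate(
    hunks: list[Hunk],
    covered: dict[str, set[int]],
    approvals: int,
    policy: GatePolicy = GatePolicy(),
) -> list[str]:
    """Return blocking reasons; an empty list means the change may merge."""
    reasons: list[str] = []
    model_authored = any(h.author == "model" for h in hunks)
    needed = (
        policy.reviewers_if_model_authored
        if model_authored
        else policy.reviewers_if_human_only
    )
    if approvals < needed:
        reasons.append(f"needs {needed} approvals, has {approvals}")
    # Coverage is measured on model-written lines only, so human-written
    # tests elsewhere in the change can't mask untested generated code.
    model_cov = coverage(hunks, covered, "model")
    if model_cov < policy.min_model_line_coverage:
        reasons.append(
            f"model-written line coverage {model_cov:.0%} is below "
            f"{policy.min_model_line_coverage:.0%}"
        )
    return reasons


# Example: four model-written lines (two covered) and one covered human line.
hunks = [
    Hunk("nav.py", frozenset({10, 11, 12, 13}), "model"),
    Hunk("nav.py", frozenset({40}), "human"),
]
covered = {"nav.py": {10, 11, 40}}
print(review_gate(hunks, covered, approvals=1))
# ['needs 2 approvals, has 1', 'model-written line coverage 50% is below 90%']
```

The design choice that matters is splitting coverage by provenance: a change can show healthy overall coverage while the lines the model actually wrote go largely untested.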

Not because governance is exciting. Because without it, you’re converting drafting speed into downstream rework – or worse, into production incidents with your name on them.

What this means for the builder

If you’re a founder or a builder, “what does 10x mean for me?” is really asking: what is my job now? The tools just changed. If your job description hasn’t changed with them, you’re probably automating the wrong half of your day.

AI is very good at the work that used to fill your calendar but never made or broke the company. It’s not good at choosing which problem to solve, or earning trust, or knowing when to kill something that’s easy to build but wrong to ship. The biggest mistake in 2026 will be getting all that time back and pouring it straight into more building.

The future advantage isn’t “more code faster.” It’s “more verified change with less downstream rework.” That’s an engineering discipline problem, not a tooling problem. And at the end of the day, the tools don’t care which one you think you’re solving – nobody’s production incident report ever started with “we didn’t generate enough code.”